Wearable devices have changed what continuous health measurement looks like. A patient or research participant can now contribute weeks of behavioral and physiological data without ever stepping into a clinic. For the field of digital health, that shift is genuinely transformative.

But the strength of a research platform isn’t only in how much data it can collect. It’s in knowing which signals are trustworthy enough to support a scientific claim, and which ones still need to be treated with caution. Among the metrics that consumer wearables produce, energy expenditure is one of the clearest examples of a measurement that often gets used with more confidence than the evidence supports.

What the validation literature actually says

When researchers compare wearable-estimated calorie burn against laboratory reference standards like indirect calorimetry or doubly labeled water, the results are surprisingly poor and surprisingly consistent.

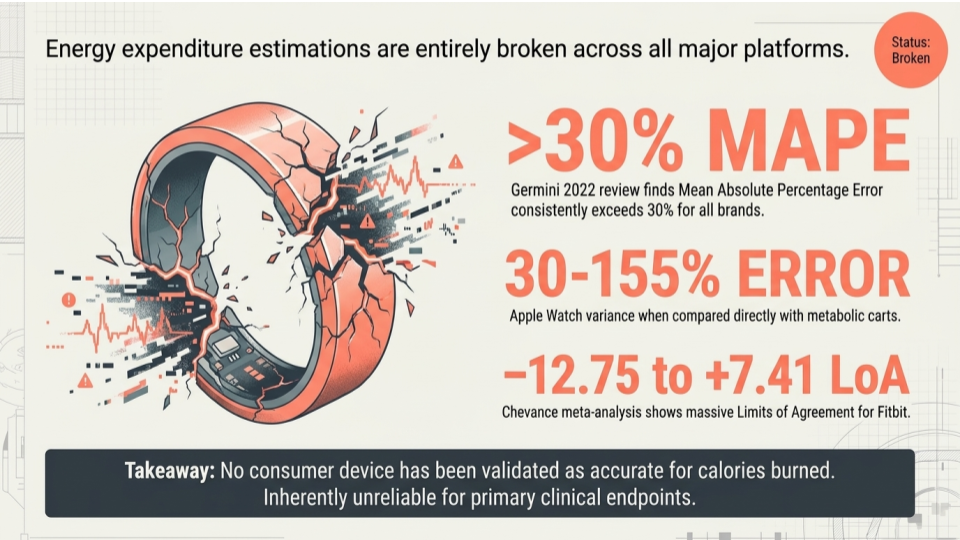

A widely cited systematic review by Germini and colleagues at McMaster University, published in the Journal of Medical Internet Research in 2022, looked at 65 studies covering 72 devices across 29 brands. For energy expenditure, the mean absolute percentage error (MAPE) was above 30% across all brands. The authors concluded that none of the tested devices proved accurate for measuring energy expenditure. Step counts and heart rate, by contrast, performed considerably better for several leading devices.
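As a minimal sketch of how that error metric is computed, the Python below calculates MAPE for paired device and reference readings. The function name and the four calorie values are hypothetical, chosen only for illustration; they are not data from the review.

```python
def mape(reference, estimate):
    """Mean absolute percentage error of device estimates against a reference."""
    if len(reference) != len(estimate) or not reference:
        raise ValueError("need two non-empty sequences of equal length")
    # Each absolute error is scaled by its own reference value, then averaged.
    ratios = [abs(e - r) / abs(r) for r, e in zip(reference, estimate)]
    return 100.0 * sum(ratios) / len(ratios)


# Hypothetical paired readings in kcal (illustrative only).
reference_kcal = [200.0, 400.0, 500.0, 250.0]  # e.g. indirect calorimetry
device_kcal = [250.0, 300.0, 750.0, 125.0]     # wearable estimate

print(mape(reference_kcal, device_kcal))  # 37.5
```

Because the errors are taken in absolute value, overestimates and underestimates do not cancel. A device can look nearly unbiased on average and still carry a large MAPE.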

A meta-analysis by Chevance and colleagues, also published in 2022 in JMIR mHealth and uHealth, focused specifically on Fitbit devices. Their pooled limits of agreement for energy expenditure ranged from roughly -12.75 to +7.41 kcal per minute, meaning a single Fitbit-derived estimate could be off in either direction by an amount large enough to swamp any plausible intervention effect.
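For readers less familiar with limits of agreement, the sketch below shows the standard single-sample Bland-Altman calculation: the mean device-minus-reference difference (the bias) plus or minus 1.96 standard deviations of those differences. This is a simplification and not the meta-analysis's method, which pools estimates across studies with a more involved model. The function name and the readings are hypothetical.

```python
from statistics import mean, stdev


def limits_of_agreement(reference, estimate, z=1.96):
    """Return (bias, lower, upper) for paired device and reference readings."""
    if len(reference) != len(estimate) or len(reference) < 2:
        raise ValueError("need two sequences of equal length, at least 2 pairs")
    diffs = [e - r for r, e in zip(reference, estimate)]
    bias = mean(diffs)   # average device-minus-reference difference
    sd = stdev(diffs)    # sample standard deviation of the differences
    return bias, bias - z * sd, bias + z * sd


# Hypothetical paired readings in kcal per minute (illustrative only).
reference_rate = [5.0, 6.0, 7.0, 8.0]
device_rate = [4.0, 4.0, 8.0, 4.0]

bias, lower, upper = limits_of_agreement(reference_rate, device_rate)
print(f"bias={bias:.2f}, limits of agreement=({lower:.2f}, {upper:.2f})")
```

The interval describes where most individual differences are expected to fall, assuming they are roughly normally distributed. That is why wide limits matter even when the average bias looks modest.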

These aren’t outlier findings from a single study or a single brand. They are convergent results from multiple independent reviews, and they have not changed meaningfully in the years since.

Why the error is so large

Wrist-worn devices estimate energy expenditure indirectly. They combine accelerometer signals, photoplethysmography-based heart rate, and proprietary algorithms that incorporate user demographics. Each of those inputs introduces its own error, and the relationship between them and true caloric output varies by activity type, intensity, body composition, and skin characteristics.

Steady-state walking on flat ground is a relatively forgiving condition. Most other activities, especially anything involving upper-body work, resistance training, cycling, or interval-style exertion, push the algorithms outside the regimes where they were calibrated. The result is the kind of variance that the validation literature keeps reporting.

This isn’t a knock on the device manufacturers. Estimating energy expenditure from a wrist-worn sensor is a genuinely hard problem. But it does mean that researchers and clinicians need to approach the resulting numbers with appropriate care.

Why this matters for clinical and behavioral research

In a clinical or behavioral trial, the choice of primary endpoint determines what the study can actually prove. If energy expenditure is treated as a primary outcome and the underlying measurement carries 30% or greater error, the study is unlikely to detect a true effect of an intervention even when one exists. Worse, it may produce results that look meaningful but are driven largely by measurement noise.
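One way to see the loss of power is a back-of-the-envelope sample size calculation. The sketch below assumes a two-arm comparison of means, a normal approximation, and measurement error that is random and independent of the true value, so that it simply inflates the observed standard deviation. All numbers are hypothetical, and a 30% MAPE does not translate directly into the error standard deviation used here.

```python
from math import ceil, sqrt
from statistics import NormalDist


def n_per_group(delta, sd_true, sd_error, alpha=0.05, power=0.80):
    """Approximate participants per arm needed to detect a mean difference.

    delta    : true difference in means between arms
    sd_true  : between-person SD of the true outcome
    sd_error : SD of random measurement error (assumed independent)
    """
    sd_observed = sqrt(sd_true ** 2 + sd_error ** 2)  # noise inflates the SD
    d = delta / sd_observed                           # attenuated effect size
    z = NormalDist()
    z_total = z.inv_cdf(1 - alpha / 2) + z.inv_cdf(power)
    return ceil(2 * (z_total / d) ** 2)


# Hypothetical scenario in kcal/day (illustrative only).
n_clean = n_per_group(delta=100, sd_true=300, sd_error=0)
n_noisy = n_per_group(delta=100, sd_true=300, sd_error=400)

print(n_clean, n_noisy)
```

Under these assumptions, the required sample size grows with the ratio of observed to true variance, so a study sized for the error-free outcome would be underpowered once measurement noise is added.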

This concern doesn’t disqualify wearables from clinical research. It clarifies where they belong. Step counts during free-living conditions, heart rate during steady-state activity, sleep timing, and patterns of physical activity over time can all provide useful and reasonably valid signals. Energy expenditure, sleep staging, and several other algorithmically derived metrics are better suited to exploratory analyses, descriptive context, or hypothesis generation than to serving as the endpoints a trial is powered to detect.

The distinction is straightforward but easy to lose sight of when a device produces a clean number on a dashboard. The number looks like a measurement, but its underlying validity may be closer to an estimate with a wide confidence band that the user never sees.

Reading wearable data with appropriate care

For researchers and clinicians designing studies that incorporate wearables, a few practical questions can help clarify whether a given metric is ready to carry the weight being placed on it.

The first is whether validation evidence exists for the specific device, the specific metric, and the specific population. A Fitbit validated in healthy young adults during treadmill walking tells you very little about how it will perform in older adults during free-living conditions.

The second is whether the metric is being used as an absolute value or as a relative within-person change over time. Many wearable signals that are unreliable in absolute terms can still be useful for tracking change within an individual, because a systematic bias that stays stable over time cancels out of the difference.

The third is whether the metric is serving as a primary endpoint, a secondary outcome, or contextual data, and whether the study design and interpretation reflect that role honestly.
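The arithmetic behind the second question can be sketched in a few lines. The example assumes the device's bias for a given person is a constant additive offset, which is an idealization. If the bias shifts between measurements, for instance because the mix of activities changes, it no longer cancels. All values are hypothetical.

```python
def change(baseline, followup):
    """Within-person change between two time points."""
    return followup - baseline


# Hypothetical true energy expenditure in kcal/day at two visits.
true_baseline, true_followup = 2200.0, 2350.0

# Case 1: the device is off by the same amount at both visits.
stable_bias = -300.0
change_stable = change(true_baseline + stable_bias, true_followup + stable_bias)

# Case 2: the device's bias differs between visits.
change_drift = change(true_baseline - 300.0, true_followup - 100.0)

print(change(true_baseline, true_followup))  # 150.0 (true change)
print(change_stable)                         # 150.0 (constant bias cancels)
print(change_drift)                          # 350.0 (drifting bias does not)
```

The practical implication is that a within-person analysis is only as trustworthy as the assumption that the device's error behaves the same way at each time point.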

None of these questions are unique to wearables. They are the same questions that any thoughtful researcher would ask about any measurement instrument. What’s distinctive about wearables is how easily their polished interfaces can obscure the underlying uncertainty, and how tempting it is to treat the resulting data as more definitive than it is.

A brief closing thought

Wearables are a remarkable tool for digital health research, and the field is still in the early innings of understanding how to use them well. Part of using them well is knowing where the validation evidence is strong, where it is weak, and where it is still being built. Energy expenditure is a useful reminder that even mature consumer technologies can have significant gaps between what they appear to measure and what they actually measure with sufficient accuracy for science.

Naming those gaps openly, and designing studies around them, is how the field moves forward.

References

Germini F, Noronha N, Borg Debono V, Abraham Philip B, Pete D, Navarro T, Keepanasseril A, Parpia S, de Wit K, Iorio A. Accuracy and Acceptability of Wrist-Wearable Activity-Tracking Devices: Systematic Review of the Literature. Journal of Medical Internet Research. 2022;24(1):e30791. doi:10.2196/30791

Chevance G, Golaszewski NM, Tipton E, Hekler EB, Buman M, Welk GJ, Patrick K, Godino JG. Accuracy and Precision of Energy Expenditure, Heart Rate, and Steps Measured by Combined-Sensing Fitbits Against Reference Measures: Systematic Review and Meta-Analysis. JMIR mHealth and uHealth. 2022;10(4):e35626. doi:10.2196/35626