A practitioner’s guide for researchers designing studies with consumer sleep trackers.

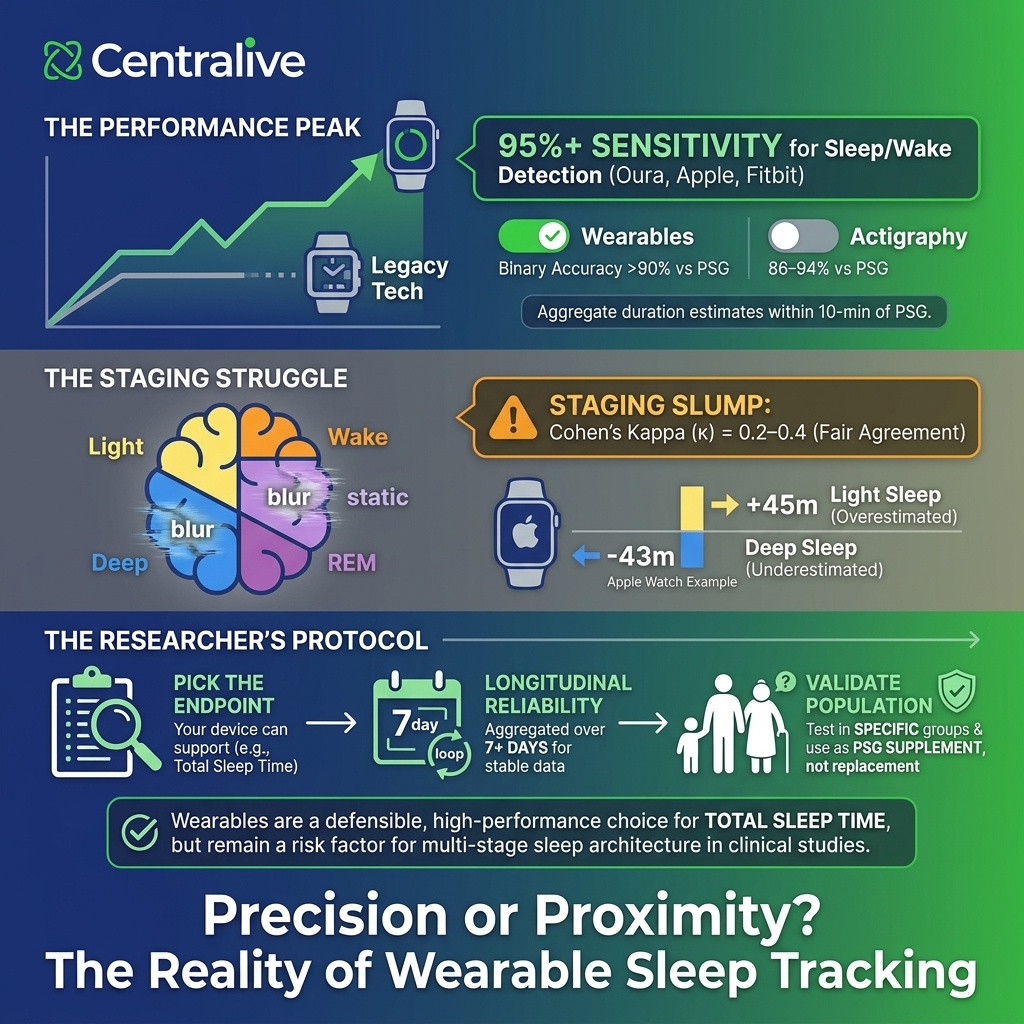

Consumer wearables have become a fixture of digital health research. We use them in our own platform for ambulatory monitoring, studies anchored to ecological momentary assessment (EMA), and just-in-time interventions. But the question we hear most often from collaborators isn’t whether to use them; it’s what they can actually measure. Sleep is one of the trickiest cases. The marketing language conflates sensitivity with accuracy, and a single chart of “95% accuracy” can quietly justify a study design the data won’t support.

This post is a short field guide for researchers planning to use Oura, Fitbit, Apple Watch, or similar devices to study sleep. The goal is to separate what’s well-validated from what’s directional, and to flag where study designs commonly overreach.

Where the devices genuinely perform well

In healthy adults under controlled conditions, modern consumer wearables detect sleep versus wake at performance levels that exceed older research-grade actigraphy. The most-cited 2024 single-night validation of Oura Gen3, Fitbit Sense 2, and Apple Watch Series 8 against polysomnography (PSG) (Robbins et al., Sensors) reported sensitivity ≥95% across all three devices for the binary sleep–wake decision, with two-stage (sleep vs. wake) agreement above 90%. For comparison, traditional actigraphy typically lands in the 86–94% range.
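To see why a high sensitivity or accuracy figure says little about wake detection, it can help to compute the three metrics side by side. The sketch below is a minimal illustration, not the scoring procedure used in any of the cited studies. The function name `sleep_wake_metrics` and the epoch counts are hypothetical, and it assumes 30-second epochs already aligned between PSG and the device.

```python
def sleep_wake_metrics(psg, device):
    """Epoch-by-epoch agreement, treating sleep as the positive class.

    Assumes both sequences are aligned, the same length, and that the
    PSG record contains at least one sleep epoch and one wake epoch.
    """
    if len(psg) != len(device):
        raise ValueError("epoch sequences must be the same length")
    pairs = list(zip(psg, device))
    tp = sum(p == "sleep" and d == "sleep" for p, d in pairs)
    tn = sum(p == "wake" and d == "wake" for p, d in pairs)
    fn = sum(p == "sleep" and d == "wake" for p, d in pairs)
    fp = sum(p == "wake" and d == "sleep" for p, d in pairs)
    return {
        "sensitivity": tp / (tp + fn),   # share of PSG sleep epochs the device calls sleep
        "specificity": tn / (tn + fp),   # share of PSG wake epochs the device calls wake
        "accuracy": (tp + tn) / len(pairs),
    }


# Hypothetical 8-hour night: 900 sleep epochs and 60 wake epochs per PSG.
psg = ["sleep"] * 900 + ["wake"] * 60
# Hypothetical device output: misses 20 sleep epochs, calls 40 wake epochs sleep.
device = ["sleep"] * 880 + ["wake"] * 20 + ["sleep"] * 40 + ["wake"] * 20

metrics = sleep_wake_metrics(psg, device)
for name, value in metrics.items():
    print(f"{name}: {value:.3f}")
```

In this made-up night, sensitivity is near 98% and overall accuracy near 94%, yet specificity is only about 33%, because wake epochs are rare and most of them are scored as sleep.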

For aggregate sleep duration, the same study found mean nightly estimates within roughly 10 minutes of PSG for all three devices. That is genuinely useful for studies that care about cohort-level trends in total sleep time, sleep timing, and longitudinal change within participants.

Practical takeaway: if your endpoint is sleep duration, sleep timing, or sleep regularity at the group level, consumer wearables are a defensible measurement choice — particularly when the alternative is participant-burdensome PSG or no measurement at all.

Where the picture gets more complicated

The story shifts substantially once you move from “is the participant asleep?” to “what stage of sleep are they in?”

In four-stage classification (wake, light, deep, REM), the same Robbins et al. cohort produced Cohen’s kappa values of 0.65 for Oura, 0.60 for Apple Watch, and 0.55 for Fitbit. These represent moderate to substantial agreement, but they are achieved under near-best-case conditions: a single night of in-lab recording in healthy adults aged 20–50 with no sleep disorders, scheduled 8-hour sleep opportunities, and a study funded by one of the device manufacturers.
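For readers less familiar with Cohen’s kappa, the following sketch shows how it discounts raw agreement by the agreement expected from chance alone. The stage counts are invented for illustration and do not come from any of the cited studies; `cohens_kappa` is a hypothetical helper written with the standard library only.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two aligned label sequences of equal length."""
    if len(rater_a) != len(rater_b):
        raise ValueError("label sequences must be the same length")
    n = len(rater_a)
    # Observed agreement: fraction of epochs with identical labels.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement: product of marginal label frequencies, summed over labels.
    count_a, count_b = Counter(rater_a), Counter(rater_b)
    expected = sum(count_a[k] * count_b[k] for k in count_a) / (n * n)
    return (observed - expected) / (1 - expected)


# Hypothetical 100-epoch record: PSG stages, then the device's calls per stage.
psg = ["wake"] * 10 + ["light"] * 50 + ["deep"] * 20 + ["rem"] * 20
device = (
    ["wake"] * 6 + ["light"] * 4                    # PSG wake epochs
    + ["light"] * 40 + ["deep"] * 5 + ["rem"] * 5   # PSG light epochs
    + ["deep"] * 12 + ["light"] * 8                 # PSG deep epochs
    + ["rem"] * 14 + ["light"] * 6                  # PSG REM epochs
)

raw_agreement = sum(p == d for p, d in zip(psg, device)) / len(psg)
kappa = cohens_kappa(psg, device)
print(f"raw agreement: {raw_agreement:.2f}, kappa: {kappa:.3f}")
```

Here the device matches PSG on 72% of epochs, but kappa comes out around 0.56 because light sleep dominates both records and inflates chance agreement. That gap is one reason percent-agreement figures read more favorably than kappa.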

The picture in independent multicenter work is meaningfully worse. Lee et al. (2023, JMIR mHealth and uHealth) validated 11 consumer trackers against PSG across multiple sites and found Apple Watch 8 and Oura Ring 3 in the fair-agreement range (κ = 0.2–0.4) for stage classification, with Fitbit Sense 2 reaching moderate agreement (κ = 0.4–0.6). A separate six-device validation in SLEEP Advances (Berryhill et al., 2025) reported kappas from 0.21 to 0.53 across Garmin, Withings, Whoop, Apple, and Fitbit devices, with specificity for wake detection between 29% and 52%. In other words, the devices over-call sleep when participants are quietly awake.

Stage-specific bias is also non-trivial. In the Robbins study, Apple Watch overestimated light sleep by 45 minutes per night and underestimated deep sleep by 43 minutes; Fitbit overestimated light sleep by 18 minutes and underestimated deep sleep by 15 minutes. Concordance for deep sleep and REM at the individual-night level was poor across all devices (ICCs 0.13 to 0.37).
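A common way to summarize this kind of device-versus-PSG bias in your own validation data is a Bland-Altman analysis: the mean difference (bias) plus 95% limits of agreement. The sketch below assumes that approach and uses invented deep-sleep minutes for six participants; it is not a reproduction of the Robbins et al. analysis, and `bland_altman` is a hypothetical helper.

```python
from statistics import mean, stdev


def bland_altman(device_minutes, psg_minutes):
    """Return (bias, lower limit, upper limit) for device minus PSG.

    Limits of agreement are bias +/- 1.96 sample standard deviations
    of the paired differences.
    """
    if len(device_minutes) != len(psg_minutes):
        raise ValueError("paired measurements must be the same length")
    diffs = [d - p for d, p in zip(device_minutes, psg_minutes)]
    bias = mean(diffs)
    spread = 1.96 * stdev(diffs)
    return bias, bias - spread, bias + spread


# Hypothetical deep-sleep minutes for six participants, one night each.
psg_deep = [90, 75, 110, 60, 95, 80]
device_deep = [50, 40, 60, 45, 40, 35]

bias, lower, upper = bland_altman(device_deep, psg_deep)
print(f"bias: {bias:.1f} min, limits of agreement: {lower:.1f} to {upper:.1f} min")
```

With these made-up values the device underestimates deep sleep by 40 minutes on average, and the limits of agreement run from roughly -68 to -12 minutes. The width of that interval, not just the mean bias, is what determines whether individual-night estimates are usable.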

Practical takeaway: single-night consumer wearable estimates of sleep architecture are not interchangeable with PSG. They are usable for tracking longitudinal change within a participant, ideally averaged over multiple nights, but they are not appropriate as a primary endpoint for studies that depend on accurate stage classification, including most clinical trials targeting sleep disorders.

Quick reference: what to trust at what level

| Sleep measure | What wearables do well | Where they fall short |
|---|---|---|
| Sleep vs. wake | Sensitivity ≥95% in healthy adults; surpasses many older actigraphs. | Specificity (detecting wake) is much weaker; quiet wakefulness is often misclassified as sleep. |
| Total sleep time | Mean estimates within ~10 minutes of PSG in a single-night study of healthy adults. | Bias grows in fragmented sleep, shift workers, and clinical populations. |
| Sleep efficiency / WASO | Group-level estimates are reasonable for tracking change over time. | Individual-night estimates can diverge widely; ICCs are fair to good, not excellent. |
| Four-stage architecture | Better than chance; useful for trend detection at the cohort level. | Cohen’s kappa 0.55–0.65 in the best-case study cited here, roughly 0.2–0.6 in independent validations. Not a substitute for PSG-staged research endpoints. |
| Deep sleep (N3) | Trends across many nights may be informative. | Apple Watch underestimated by ~43 min; Fitbit by ~15 min vs. PSG. ICCs were poor (0.13–0.36). |
| REM sleep | Sensitivity reaches 70–80% on best devices. | Concordance with PSG is poor at the individual-night level (ICCs 0.13–0.37). |

Implications for study design

Three patterns come up repeatedly in our consultations with researchers using wearables for sleep, and each has a defensible response.

1. Pick the endpoint your device can actually support. If the study question is about sleep duration or sleep regularity, consumer wearables are a reasonable primary measure. If it depends on REM percentage, deep sleep duration, or differentiating fragmented from consolidated sleep, plan for PSG, ambulatory EEG, or a validated research-grade alternative.

2. Validate in your own population. Most published validation work uses healthy young-to-middle-aged adults. Performance in older adults, adolescents, shift workers, and people with sleep disorders is consistently worse and less well-characterized. For clinical populations, a within-study validation substudy against PSG (or ambulatory EEG) for a subset of participants is good practice.

3. Treat night-level architecture estimates with caution. Even when group-level totals look reasonable, individual-night estimates of REM and deep sleep show wide variability. Aggregating across multiple nights — typically a week or more — substantially improves reliability for within-person trends.
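One way to reason about how many nights to aggregate (point 3 above) is the Spearman-Brown prophecy formula, which predicts the reliability of a k-night average from single-night reliability. The sketch below assumes nights behave like parallel measurements and uses a hypothetical night-to-night reliability of 0.40; that value is illustrative and is not the device-versus-PSG ICC reported in the studies above.

```python
def spearman_brown(single_night_reliability, nights):
    """Predicted reliability of the mean of `nights` parallel measurements."""
    if not 0 < single_night_reliability < 1:
        raise ValueError("single-night reliability must be between 0 and 1")
    if nights < 1:
        raise ValueError("nights must be at least 1")
    r = single_night_reliability
    return nights * r / (1 + (nights - 1) * r)


# Hypothetical single-night reliability for a stage-duration estimate.
single_night = 0.40
projected = {k: spearman_brown(single_night, k) for k in (1, 3, 7, 14)}

for k, value in projected.items():
    print(f"{k:>2} nights: predicted reliability {value:.2f}")
```

Under these assumptions, reliability rises from 0.40 for one night to about 0.82 for a week and about 0.90 for two weeks. Averaging only reduces random night-to-night error. It does not correct a systematic bias such as consistent underestimation of deep sleep, so aggregation supports within-person trends rather than absolute stage durations.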

A note on funding and selection bias

Many of the most-cited single-device validation studies are sponsored by the device manufacturers, and several have lead authors with disclosed advisory relationships with the companies whose products they validate. This is not by itself disqualifying — the data are public and the methods are sound — but the choice of comparators, populations, and reporting emphasis tends to favor the sponsoring device. Independent multicenter studies generally report lower agreement than industry-supported ones. When citing validation evidence, flagging the funding source is good practice.

The bottom line

Consumer wearables have earned their place in sleep research, but only for the right questions. They detect sleep with high sensitivity (though they often miss quiet wakefulness), are useful for tracking sleep duration and timing across cohorts and over time, and are genuinely valuable for designs that need ambulatory measurement at scale. They are not yet adequate substitutes for PSG when the science depends on accurate sleep stage classification, and they are weaker still in clinical populations where validation evidence is sparse.

At Centralive, we build studies around what the measurement can actually support. If you’re planning a wearable-based sleep protocol and want a sanity check on the design, get in touch — we’re happy to think it through with you.

Selected references

- Robbins, R., Weaver, M.D., Sullivan, J.P., et al. (2024). Accuracy of Three Commercial Wearable Devices for Sleep Tracking in Healthy Adults. Sensors, 24(20), 6532.

- Lee, T., Cho, Y., Cha, K.S., et al. (2023). Accuracy of 11 Wearable, Nearable, and Airable Consumer Sleep Trackers: Prospective Multicenter Validation Study. JMIR mHealth and uHealth.

- Berryhill, S., et al. (2025). A performance validation of six commercial wrist-worn wearable sleep-tracking devices for sleep stage scoring compared to polysomnography. SLEEP Advances.

- de Zambotti, M., Goldstein, C., Cook, J., et al. (2023). State of the science and recommendations for using wearable technology in sleep and circadian research. Sleep, 47, zsad325.

- Chinoy, E.D., Cuellar, J.A., Jameson, J.T., Markwald, R.R. (2022). Performance of Four Commercial Wearable Sleep-Tracking Devices Tested Under Unrestricted Conditions at Home in Healthy Young Adults. Nature and Science of Sleep, 14, 493–516.