If you wear a Garmin, Apple Watch, Fitbit, or Whoop, there’s a good chance you’ve glanced at its VO2max estimate and either felt smug or slightly insulted. VO2max is the gold-standard measure of cardiorespiratory fitness, and a wrist-based estimate of it has become one of the most prominent metrics on consumer wearables.

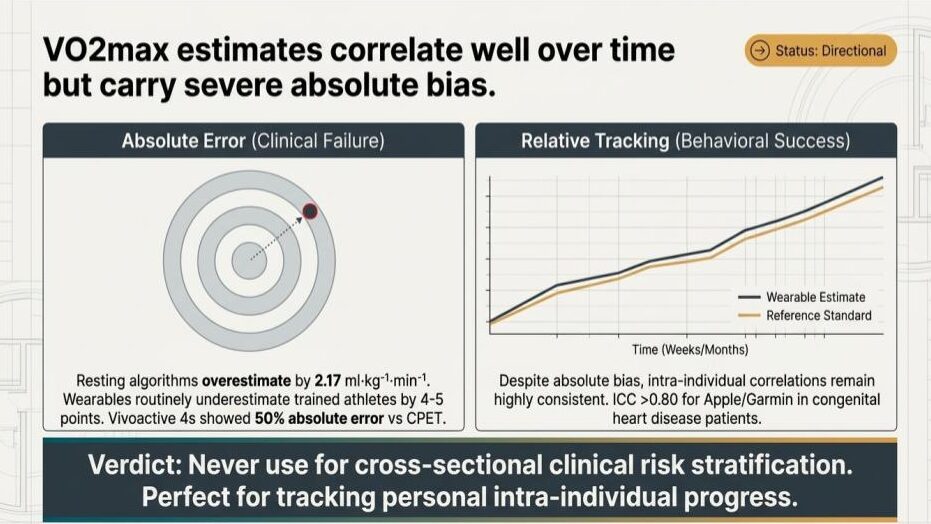

Here’s the uncomfortable truth from the validation literature: as a cross-sectional number, it’s often badly wrong. As a longitudinal signal, it’s surprisingly useful. Those two facts get conflated constantly, and the difference matters enormously depending on whether you’re a clinician, a coach, or just someone trying to get fitter.

The absolute number is the weak link

When researchers put consumer wearables next to cardiopulmonary exercise testing (CPET) — the laboratory gold standard with a metabolic cart, mask, and graded protocol — the gap is rarely subtle.

In a recent CONVALESCENCE sub-study of 203 middle-aged adults, a Garmin Vivoactive 4s overestimated CPET-measured VO2max by roughly 14 ml·kg⁻¹·min⁻¹, with limits of agreement spanning from about 2 to 27 ml·kg⁻¹·min⁻¹. Median absolute percentage error sat around 56–60%. The error wasn’t random; it scaled directly with how mismatched the participant’s actual fitness was from what an anthropometric equation would predict from their age, sex, height, and weight. In other words, the watch was essentially regressing toward a demographic average and calling it a measurement.
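If method-comparison statistics are unfamiliar, the sketch below shows how bias, limits of agreement, and absolute percentage error are typically computed from paired device and CPET readings. The readings are invented for illustration and are not data from the CONVALESCENCE sub-study, and the 1.96 multiplier assumes the conventional 95% Bland-Altman limits.

```python
import statistics

def agreement_stats(device, reference):
    """Bland-Altman style agreement between paired device and reference VO2max values."""
    if len(device) != len(reference) or len(device) < 2:
        raise ValueError("need at least two paired readings")
    diffs = [d - r for d, r in zip(device, reference)]
    bias = statistics.mean(diffs)            # mean difference (device minus reference)
    sd = statistics.stdev(diffs)             # sample SD of the differences
    loa = (bias - 1.96 * sd, bias + 1.96 * sd)  # assumed 95% limits of agreement
    ape = [abs(d - r) / r * 100 for d, r in zip(device, reference)]  # % error per person
    return {"bias": bias, "loa": loa,
            "mape": statistics.mean(ape), "median_ape": statistics.median(ape)}

# Hypothetical paired readings in ml·kg⁻¹·min⁻¹ (illustrative only)
cpet = [22.0, 25.0, 28.0, 31.0, 35.0]
watch = [38.0, 39.0, 41.0, 43.0, 46.0]

stats = agreement_stats(watch, cpet)
print(f"bias {stats['bias']:.1f}, LoA {stats['loa'][0]:.1f} to {stats['loa'][1]:.1f}, "
      f"median APE {stats['median_ape']:.0f}%")
```

Notice that the hypothetical watch readings span a narrower range (38 to 46) than the CPET values (22 to 35), a small-scale version of the compression toward the mean described below.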

An Apple Watch Series 7 validation study found a mean absolute percentage error of about 16% against indirect calorimetry, with the device systematically overestimating low-fitness individuals and underestimating high-fitness ones, a compression toward the mean that shows up across nearly every device tested. The Garmin fēnix 6 fares better in athletic populations (MAPE around 7% in one validation), but even there the size and direction of the error shift depending on the cohort.

The pattern is consistent: wearables tend to overestimate sedentary and clinical populations and underestimate trained athletes, sometimes by 4–5 ml·kg⁻¹·min⁻¹. For an elite runner whose true VO2max is 65, that’s a meaningful misclassification. For a cardiac rehab patient whose true VO2max is 18, an overestimate of 5 points isn’t a rounding error — it’s the difference between “concerning” and “reassuring.”
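The compression pattern can be reproduced with a toy model in which the reported value is a weighted blend of true fitness and a demographic prediction. This is a hypothetical illustration, not any vendor’s algorithm: `PRIOR` and `WEIGHT` are made-up parameters chosen so the errors land in the 4–5 point range quoted above.

```python
def shrunk_estimate(true_vo2max: float, demographic_prior: float, weight: float) -> float:
    """Toy model: blend true VO2max with a demographic prediction.

    weight = 1.0 would be a perfect device; smaller weights pull every
    reading toward the demographic prior (hypothetical parameter).
    """
    if not 0.0 <= weight <= 1.0:
        raise ValueError("weight must be between 0 and 1")
    return weight * true_vo2max + (1.0 - weight) * demographic_prior

PRIOR = 40.0   # hypothetical demographic prediction, ml·kg⁻¹·min⁻¹
WEIGHT = 0.8   # hypothetical share of the reading driven by the physiological signal

for label, true_value in [("cardiac rehab", 18.0), ("elite runner", 65.0)]:
    est = shrunk_estimate(true_value, PRIOR, WEIGHT)
    print(f"{label:13s} true {true_value:4.1f}  watch {est:4.1f}  error {est - true_value:+.1f}")
```

Under these assumptions the cardiac rehab patient reads 22.4 (4.4 points high) and the elite runner reads 60.0 (5 points low): one shrinkage mechanism produces errors of opposite sign at the two ends of the fitness range.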

So why does anyone trust the trend line?

Because the within-person signal is a different beast entirely.

Even when absolute values are biased, the watch’s estimate tends to rise and fall with the reference standard. Intraclass correlation coefficients above 0.80 have been reported for Apple Watch and Garmin in specific clinical populations, including patients with congenital heart disease. Pearson correlations of 0.6–0.9 against CPET show up across most validation studies, even when bias is severe.

Mechanically, this makes sense. The algorithms behind these estimates — Firstbeat for Garmin, Apple’s proprietary model, similar approaches at Fitbit and Whoop — are mostly inferring VO2max from the heart rate response to a given workload during outdoor activity. The absolute calibration is fragile because it leans on demographic priors, assumed maximum heart rates, and assumptions about effort. But the delta — how your heart rate response to running 10 km/h changes over six weeks of training — is a real physiological signal the watch can actually pick up.

That’s why the same device can be useless for risk stratification and useful for tracking. It’s measuring the right thing in the wrong units.
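The same idea in general form, assuming (as a simplification) that a device’s estimate $\hat{V}_t$ at time $t$ is roughly linear in true VO2max $V_t$, with an offset $a$ that absorbs demographic priors, a slope $b$ that captures compression toward the mean, and noise $\varepsilon_t$:

$$
\hat{V}_t = a + b\,V_t + \varepsilon_t
\quad\Longrightarrow\quad
\hat{V}_{t_2} - \hat{V}_{t_1} = b\,(V_{t_2} - V_{t_1}) + (\varepsilon_{t_2} - \varepsilon_{t_1})
$$

The offset $a$ drops out of the difference as long as the inputs behind it (age, sex, height, weight) barely change between readings. Compression ($b < 1$) shrinks the apparent change, but the change keeps its sign as long as $b > 0$ and the true change is large relative to the noise.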

What this means for three different audiences

For clinicians, the implication is direct: a single VO2max readout from a consumer wearable should not be used to stratify cardiovascular risk, screen for poor cardiorespiratory fitness, or replace a CPET in any decision that actually matters. The bias is too large and too systematic to ignore, and the people whose readings are most wrong are precisely the people most likely to be in a clinical pathway — those at the extremes of fitness.

For researchers running observational or epidemiological work, wearable VO2max can be a reasonable change-score variable in a longitudinal design, particularly when participants serve as their own controls. As a single-time-point outcome compared between groups, it is confounded by the demographic equations baked into the algorithm: groups that differ in age, sex, or body size can differ in estimated VO2max for reasons that have little to do with their true fitness.

For users, the practical guidance is the one most often missed: ignore the absolute number, watch the trajectory. If your estimate climbed three points over a training block, you almost certainly got fitter. If it sits at 47 instead of the 51 you think you deserve, that gap tells you very little. The watch is a decent personal trainer and a poor diagnostician.

The broader lesson for consumer health tech

VO2max is a useful microcosm for almost every passive metric these devices generate — sleep stages, recovery scores, stress, HRV-derived training readiness. The validation literature tends to find the same shape: weak absolute accuracy against gold-standard references, much stronger intra-individual tracking. The mistake isn’t trusting the device; it’s trusting it for the wrong question.

The verdict on the slide that prompted this post puts it cleanly: never use these estimates for cross-sectional clinical risk stratification, but they’re well-suited to tracking personal intra-individual progress. That’s not a hedge — it’s two different tools sharing one number, and knowing which one you’re holding is most of the battle.
